Finally know what your team is building with ChatGPT.

Score every GPT and Project. Surface risks. Map workflow coverage. Detect where AI demand is concentrating across your org. Every sync ends with a board-ready executive briefing.

See it in action

Try the demo. No API key, no signup. Or explore the screens below.

The data is there. The intelligence isn't.

OpenAI gives enterprise admins full visibility into their workspace. But visibility isn't intelligence, and right now, most leaders are flying blind.

No inventory

Your OpenAI admin console shows you what exists. Not what's dangerous, redundant, or wasted. Hundreds of GPTs and Projects built across the org, with no risk assessment and no quality signal.

No patterns

You don't know who your best AI builders are. Or who needs training. Or which GPTs are doing the same thing. Or which workflows have no AI coverage at all. The data exists. It's just unexplored.

No measurement

What is your ChatGPT Enterprise investment actually producing? Without an answer, ChatGPT Enterprise is a cost center that can't justify renewal.

Everything leaders need. In one place.

Every Custom GPT and Project in your workspace: discovered, scored, and acted on.

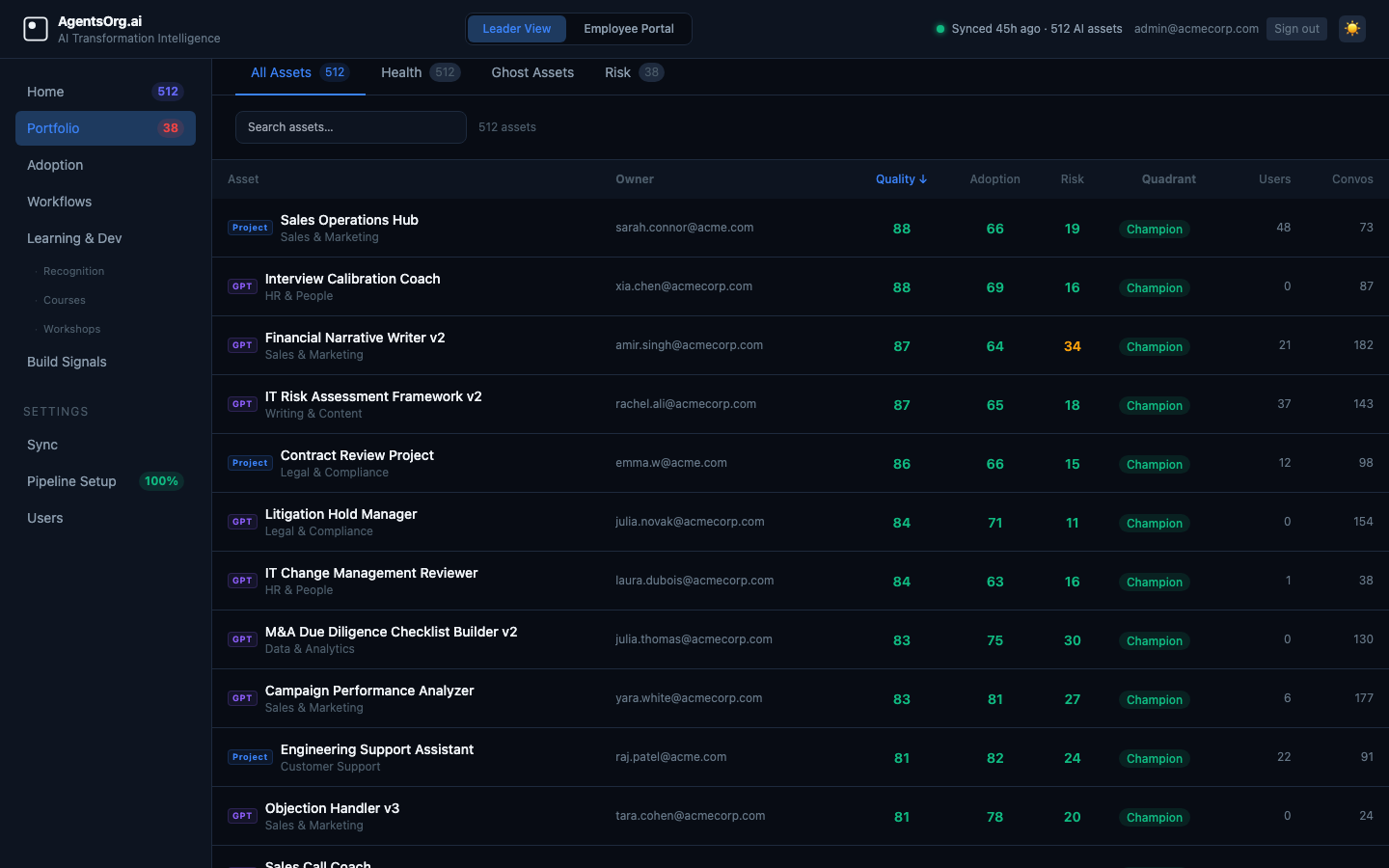

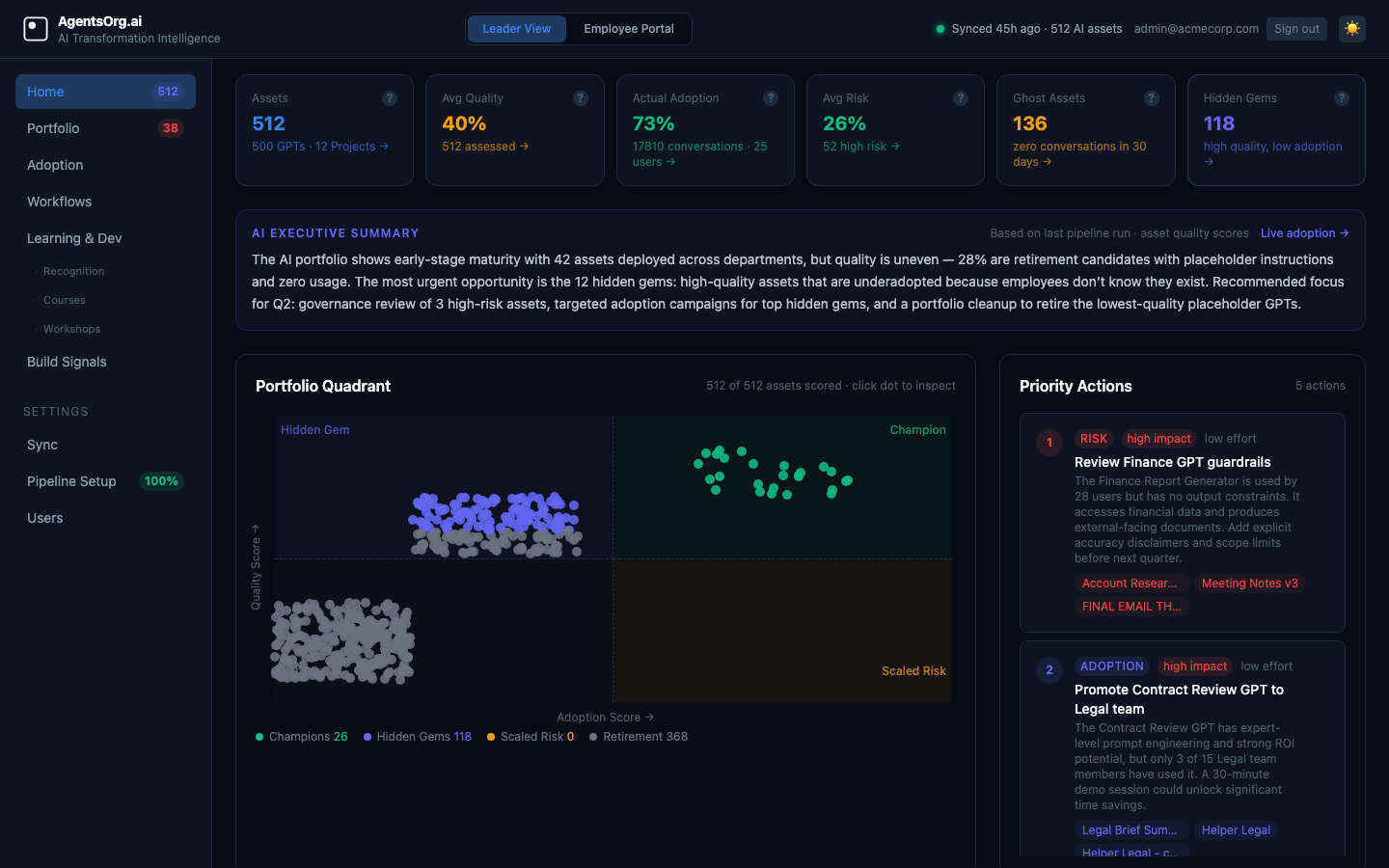

Quality × Adoption Quadrant + AI Priority Actions

Every scored GPT lands in one of four quadrants: Champion (high quality, high adoption: scale these), Hidden Gem (high quality, low adoption: promote these), Scaled Risk (low quality, high adoption: fix these now), Retirement Candidate (low quality, low adoption: decommission). After every pipeline run, an LLM generates 5–10 ranked priority actions and a board-ready executive summary paragraph. Each action is tied to specific GPTs with impact, effort, and reasoning.

Champion: High quality · High adoption. Scale and certify.

Hidden Gem: High quality · Low adoption. Promote and surface.

Scaled Risk: Low quality · High adoption. Fix before it spreads.

Retirement Candidate: Low quality · Low adoption. Decommission.
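The quadrant rule above can be sketched in a few lines. This is a minimal illustration, not the platform's actual logic: the cut-off of 50 between "low" and "high" and the `ScoredAsset` type are assumptions, since the page does not state how the boundary is drawn.

```python
from dataclasses import dataclass

# Assumed cut-off between "low" and "high" on the 0-100 scale (not given in the source).
THRESHOLD = 50.0


@dataclass
class ScoredAsset:
    """Hypothetical container for one scored GPT or Project."""
    name: str
    quality: float   # composite quality score, 0-100
    adoption: float  # composite adoption score, 0-100


def quadrant(asset: ScoredAsset, threshold: float = THRESHOLD) -> str:
    """Map quality and adoption scores to one of the four quadrant labels."""
    high_quality = asset.quality >= threshold
    high_adoption = asset.adoption >= threshold
    if high_quality and high_adoption:
        return "Champion"              # scale and certify
    if high_quality:
        return "Hidden Gem"            # promote and surface
    if high_adoption:
        return "Scaled Risk"           # fix before it spreads
    return "Retirement Candidate"      # decommission


for asset in (ScoredAsset("Contract Reviewer", 82, 71), ScoredAsset("Old FAQ Bot", 30, 12)):
    print(asset.name, "->", quadrant(asset))
```

A fixed threshold keeps the labels stable between syncs; a percentile-based boundary would be a reasonable alternative if scores cluster tightly.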

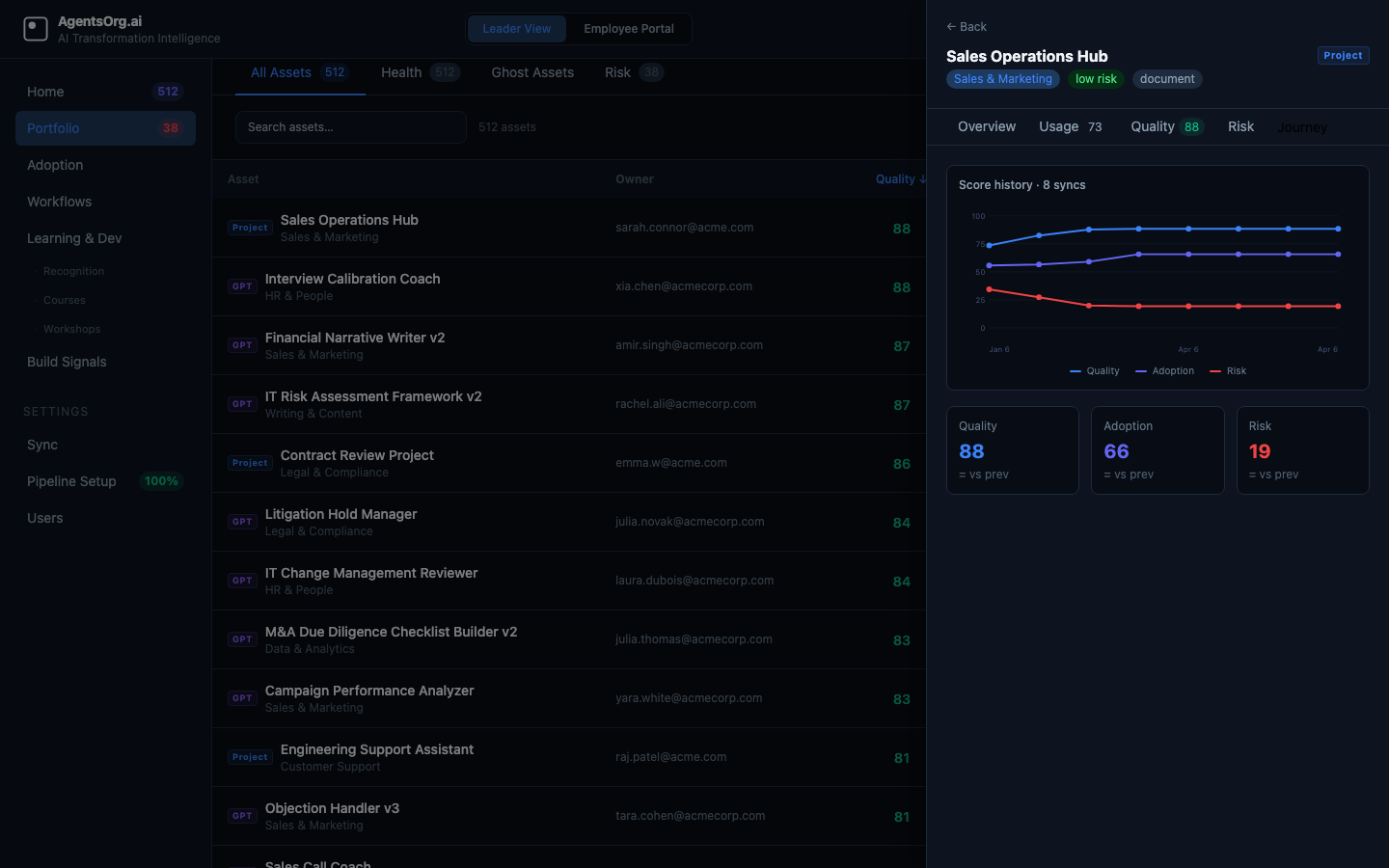

Scored. Ranked. Historically tracked.

Every asset gets composite quality, adoption, and risk scores (0–100). Tabbed views: all assets, ghost assets (zero usage), risk flags. The drawer "Journey" tab shows how each GPT's scores have changed across every sync. Portfolio health trend tracks whether your AI investment is improving over time.

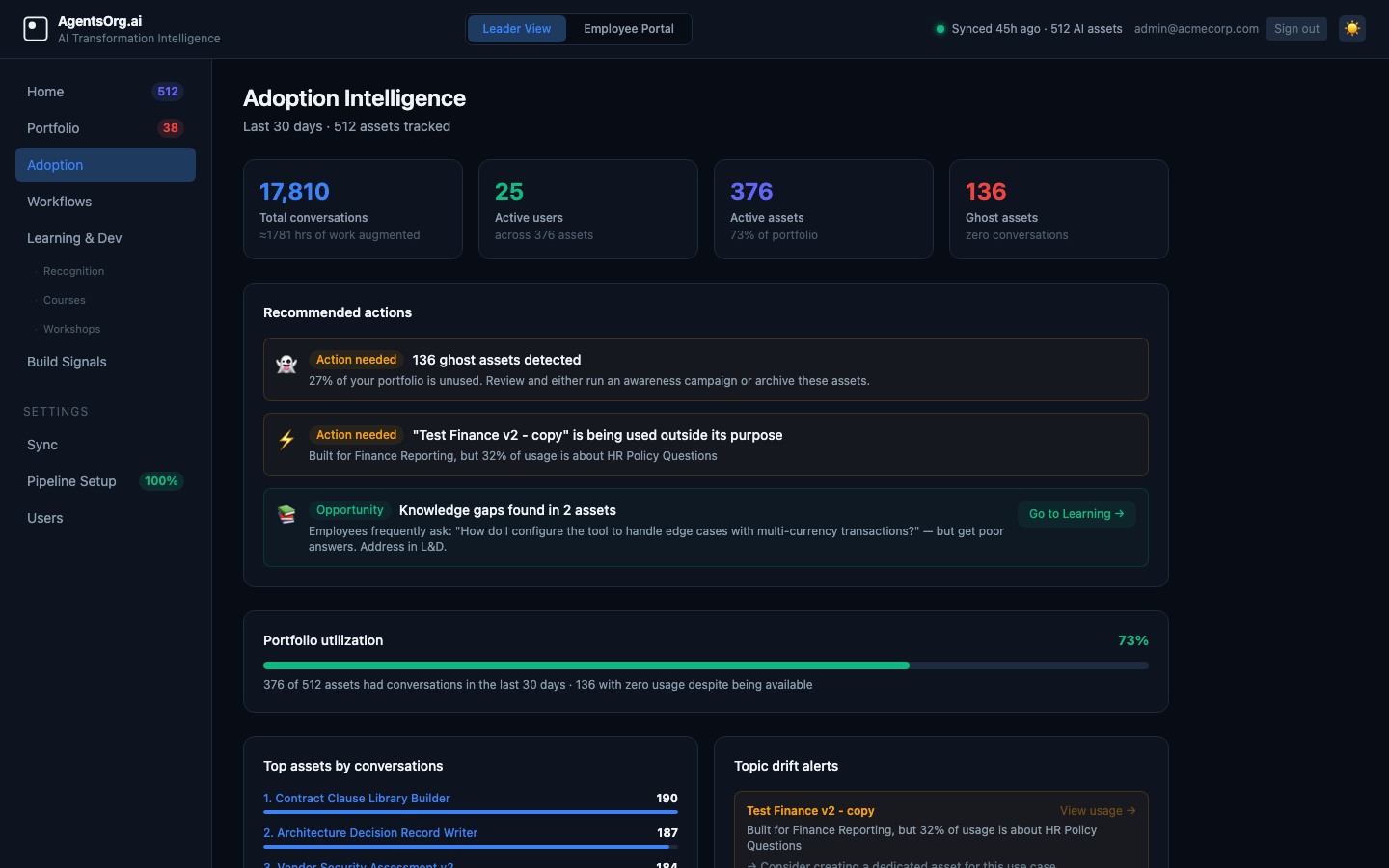

Conversation intelligence.

Syncs the conversation JSONL export from OpenAI to answer: which GPTs are actually being used, by whom, and for what topics. Conversations are attributed back to the originating GPT or Project via the conversation pipeline, giving each asset its own usage fingerprint. Surfaces ghost assets (built but unused), drift alerts (usage patterns shifting), knowledge gaps (conversation topics with no asset match), and per-user adoption scores with role fit analysis.
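As a rough illustration of attribution, the sketch below aggregates JSONL conversation records per asset and lists ghost assets. The keys `asset_id` and `user_id` are hypothetical placeholders; the real export schema may differ.

```python
import json
from collections import defaultdict
from typing import Dict, Iterable, List, Set


def usage_by_asset(lines: Iterable[str]) -> Dict[str, Dict[str, int]]:
    """Count conversations and distinct users per asset.

    Assumes one JSON object per line with hypothetical keys
    "asset_id" and "user_id".
    """
    conversations: Dict[str, int] = defaultdict(int)
    users: Dict[str, Set[str]] = defaultdict(set)
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        record = json.loads(line)
        asset_id = record.get("asset_id")
        if asset_id is None:
            continue  # conversation not tied to a GPT or Project
        conversations[asset_id] += 1
        user_id = record.get("user_id")
        if user_id is not None:
            users[asset_id].add(user_id)
    return {
        asset_id: {"conversations": count, "unique_users": len(users[asset_id])}
        for asset_id, count in conversations.items()
    }


def ghost_assets(inventory: Iterable[str], usage: Dict[str, Dict[str, int]]) -> List[str]:
    """Assets present in the inventory that have no attributed conversations."""
    return sorted(asset_id for asset_id in inventory if asset_id not in usage)


SAMPLE = """\
{"asset_id": "gpt-1", "user_id": "u1"}
{"asset_id": "gpt-1", "user_id": "u2"}
{"asset_id": "gpt-2", "user_id": "u1"}
{"asset_id": null, "user_id": "u3"}
"""
usage = usage_by_asset(SAMPLE.splitlines())
print(usage)
print(ghost_assets(["gpt-1", "gpt-2", "gpt-3"], usage))
```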

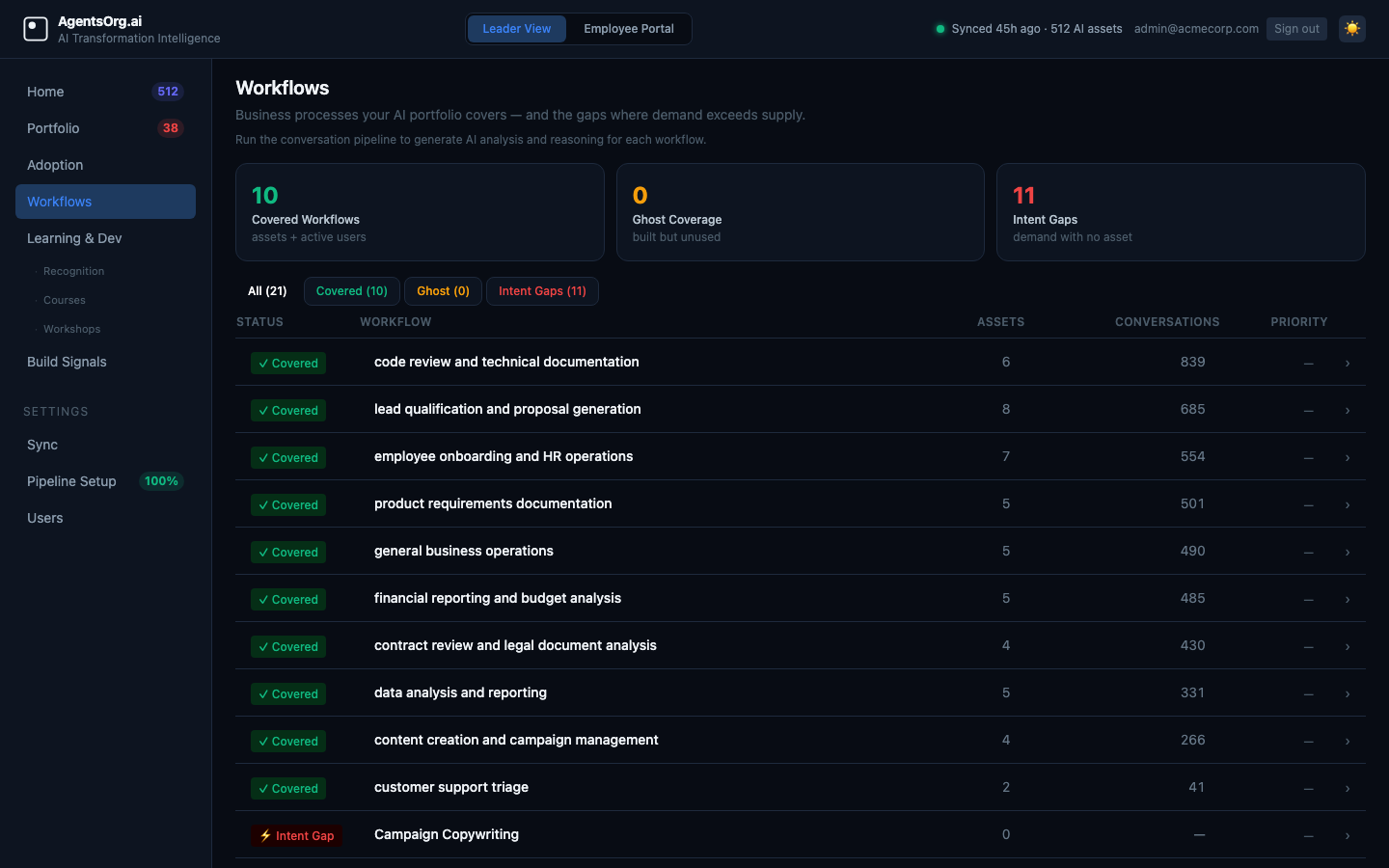

Business process coverage analysis.

Maps every conversation and GPT to the business processes they touch. After each sync, the platform categorizes every workflow into one of three states and generates LLM reasoning and a recommended action for each.

Covered: assets built + active conversations. Healthy. Monitor for drift.

Ghost: assets built, but zero conversation activity. Nobody's using it.

Intent gap: conversation demand exists, but no dedicated asset. Build this next.
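The three states reduce to a small decision rule. The sketch below assumes each workflow has already been given an asset count and a conversation count; the function name and the fallback label for workflows with neither are illustrative, not taken from the product.

```python
def coverage_state(asset_count: int, conversation_count: int) -> str:
    """Classify one business workflow by what exists and what is used."""
    if asset_count > 0 and conversation_count > 0:
        return "covered"     # healthy; monitor for drift
    if asset_count > 0:
        return "ghost"       # built, but nobody is using it
    if conversation_count > 0:
        return "intent gap"  # demand without a dedicated asset; build this next
    # Assumption: a workflow with neither assets nor demand falls outside the three states.
    return "no signal"


workflows = {"Meeting summaries": (3, 240), "Vendor onboarding": (1, 0), "RFP drafting": (0, 57)}
for name, (assets, conversations) in workflows.items():
    print(f"{name}: {coverage_state(assets, conversations)}")
```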

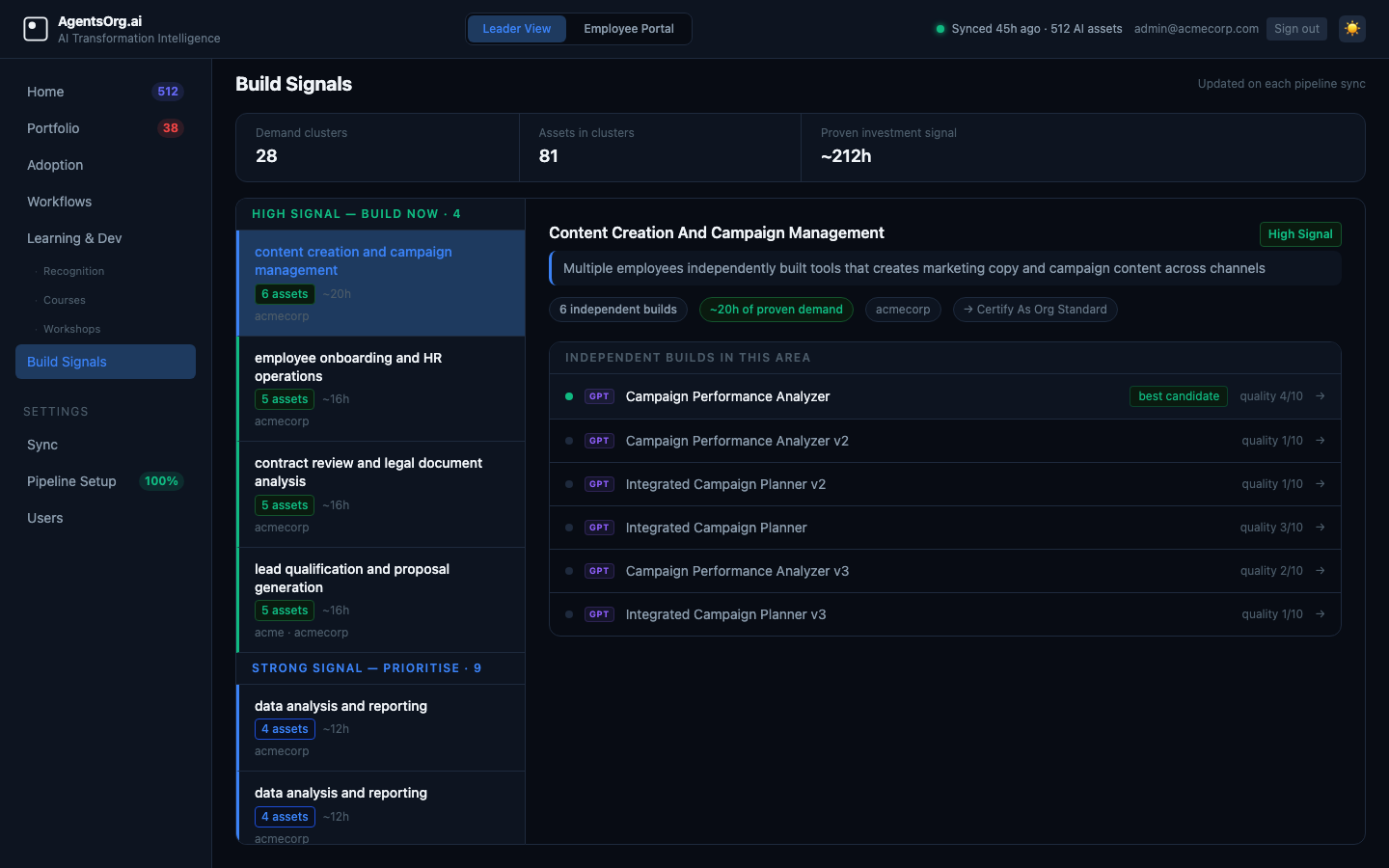

Proven demand. Not just redundancy.

Semantic clustering (pgvector + centroid algorithm + LLM validation) detects when multiple teams independently built GPTs for the same workflow. That convergence is a strong demand signal. Surfaces the best-candidate asset to certify as an org standard, with estimated hours saved by consolidation.
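To make the centroid idea concrete, here is a minimal in-memory sketch of greedy centroid clustering over embeddings. It is an assumption-laden stand-in: the real system stores vectors in pgvector and adds LLM validation, and the similarity threshold of 0.9 and the toy three-dimensional vectors are invented for illustration.

```python
import math
from typing import Dict, List


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def centroid(vectors: List[List[float]]) -> List[float]:
    """Component-wise mean of a non-empty list of vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def cluster(embeddings: Dict[str, List[float]], threshold: float = 0.9) -> List[List[str]]:
    """Assign each asset to the most similar centroid, or start a new cluster."""
    clusters: List[dict] = []
    for name, vector in embeddings.items():
        best, best_similarity = None, threshold
        for candidate in clusters:
            similarity = cosine(vector, candidate["centroid"])
            if similarity >= best_similarity:
                best, best_similarity = candidate, similarity
        if best is None:
            clusters.append({"members": [name], "vectors": [vector], "centroid": vector})
        else:
            best["members"].append(name)
            best["vectors"].append(vector)
            best["centroid"] = centroid(best["vectors"])  # recompute after each join
    return [c["members"] for c in clusters]


EMBEDDINGS = {
    "meeting-notes-sales": [1.0, 0.0, 0.1],
    "meeting-summary-hr": [0.9, 0.1, 0.1],
    "sql-helper": [0.0, 1.0, 0.0],
}
groups = cluster(EMBEDDINGS)
demand_signals = [g for g in groups if len(g) >= 2]  # several teams built the same thing
print(demand_signals)
```

Clusters with two or more members are the candidates worth passing to a validation step before treating them as demand signals.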

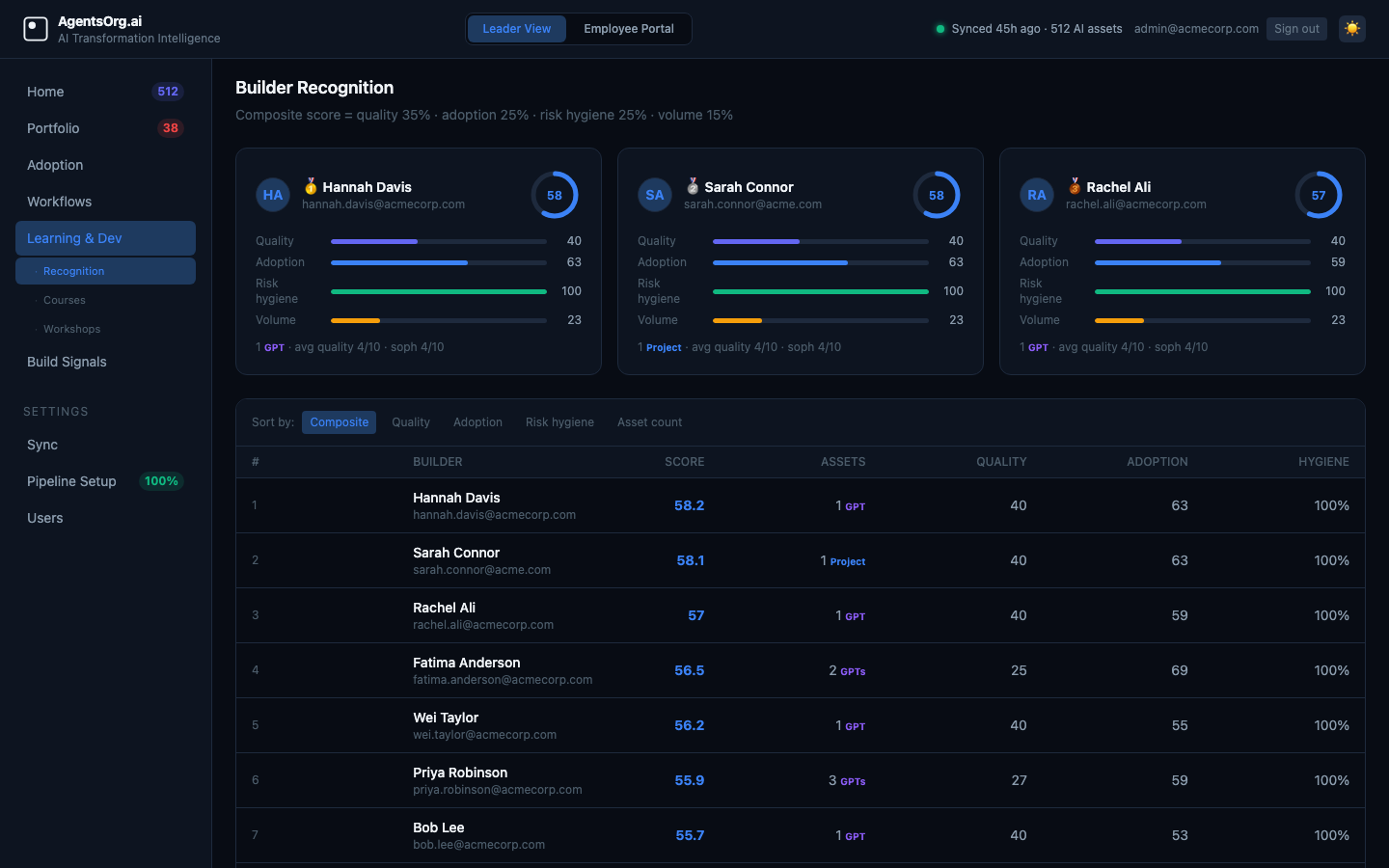

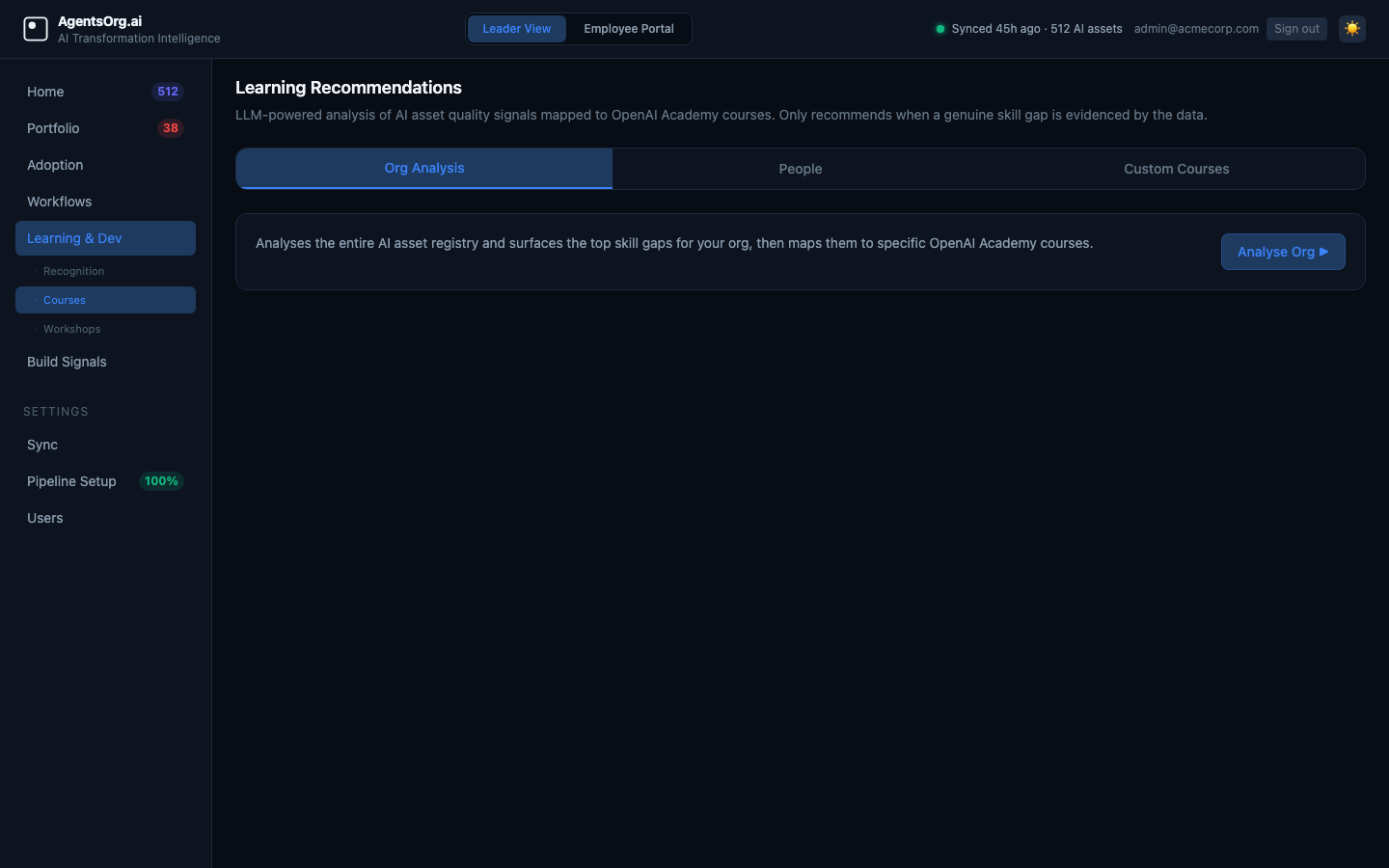

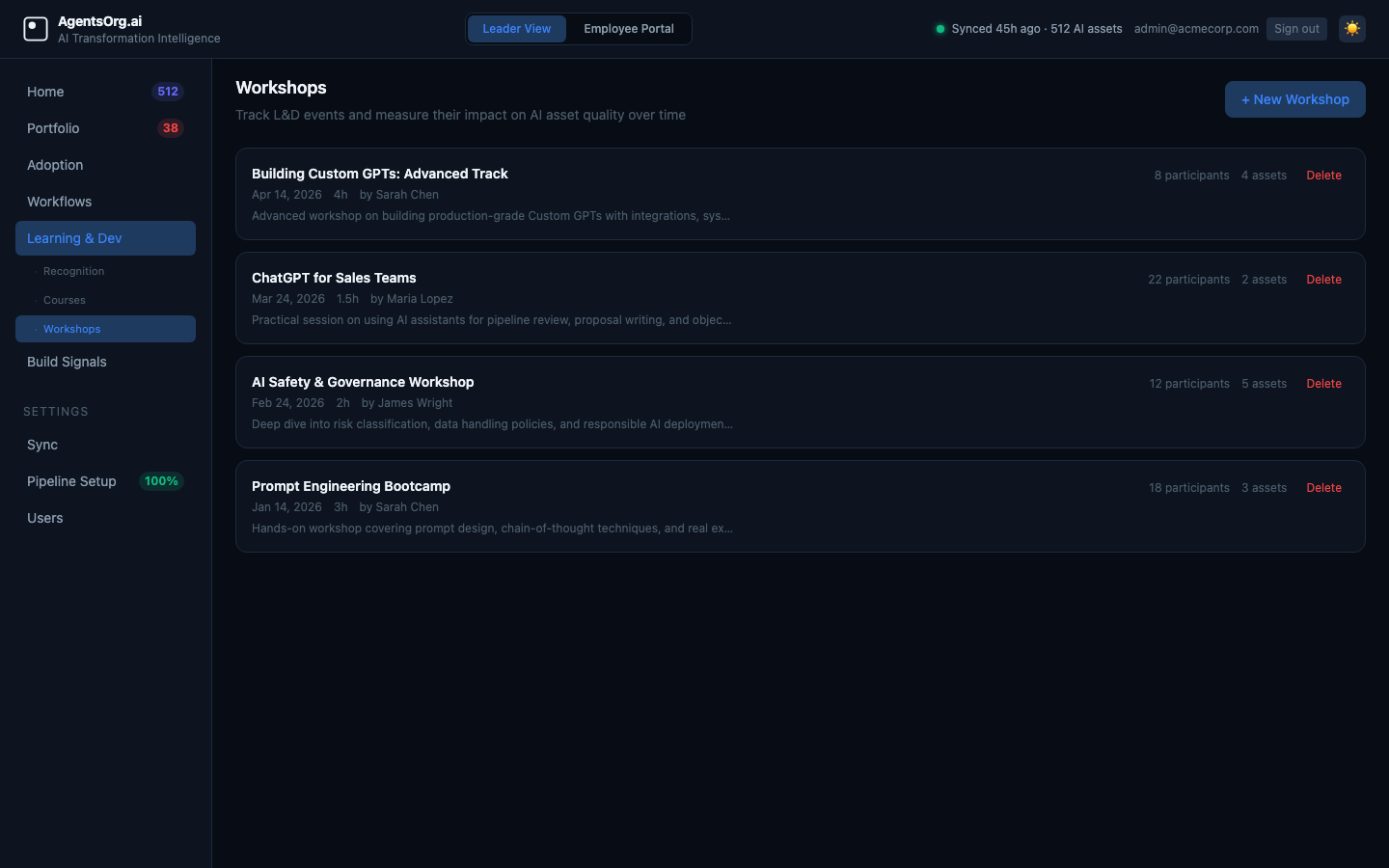

Recognition. Courses. Workshops.

Recognition: composite builder scores across quality, adoption, hygiene, and volume. Know who to promote and who to support. Learning: LLM-recommended courses per builder, grounded in actual skill gaps in their GPT output, not a generic catalog. Workshops: track sessions, participants, GPT tagging, and measure quality improvement before vs after.

SSO · TOTP MFA · Role-gated access · Encrypted secrets

SSO: Okta, Entra ID, Google. Enforce SSO org-wide. Non-admins can't bypass it.

TOTP MFA: Two-step login with any TOTP app. Secrets Fernet-encrypted at rest.

Role-gated access: Employees see the portal. Only AI leaders see org intelligence and pipeline data.

Encrypted secrets: API keys, OIDC client secrets, MFA secrets. All Fernet-encrypted. Key rotation endpoint included.
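For readers unfamiliar with how an authenticator-app code is checked, the sketch below implements standard TOTP (RFC 6238, HMAC-SHA1, 30-second step) with the Python standard library only. It is illustrative and not the platform's code; the one-step drift window in `verify` is an assumption.

```python
import base64
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret_b32: str, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Compute the TOTP code for a base32 secret at a given Unix time."""
    key = base64.b32decode(secret_b32, casefold=True)
    counter = int((time.time() if at is None else at) // step)
    digest = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation (RFC 4226)
    number = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(number % 10 ** digits).zfill(digits)


def verify(secret_b32: str, submitted: str, at: Optional[float] = None,
           step: int = 30, window: int = 1) -> bool:
    """Accept the current code or one within `window` steps, to tolerate clock drift."""
    now = time.time() if at is None else at
    for drift in range(-window, window + 1):
        moment = now + drift * step
        if moment < 0:
            continue  # no valid counter before the Unix epoch
        if hmac.compare_digest(totp(secret_b32, moment, step), submitted):
            return True
    return False


# Shared secret from the RFC 6238 test vectors, base32-encoded as an authenticator app expects.
SECRET = base64.b32encode(b"12345678901234567890").decode()
print(verify(SECRET, totp(SECRET)))
```

The constant-time comparison avoids leaking how many leading digits matched.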

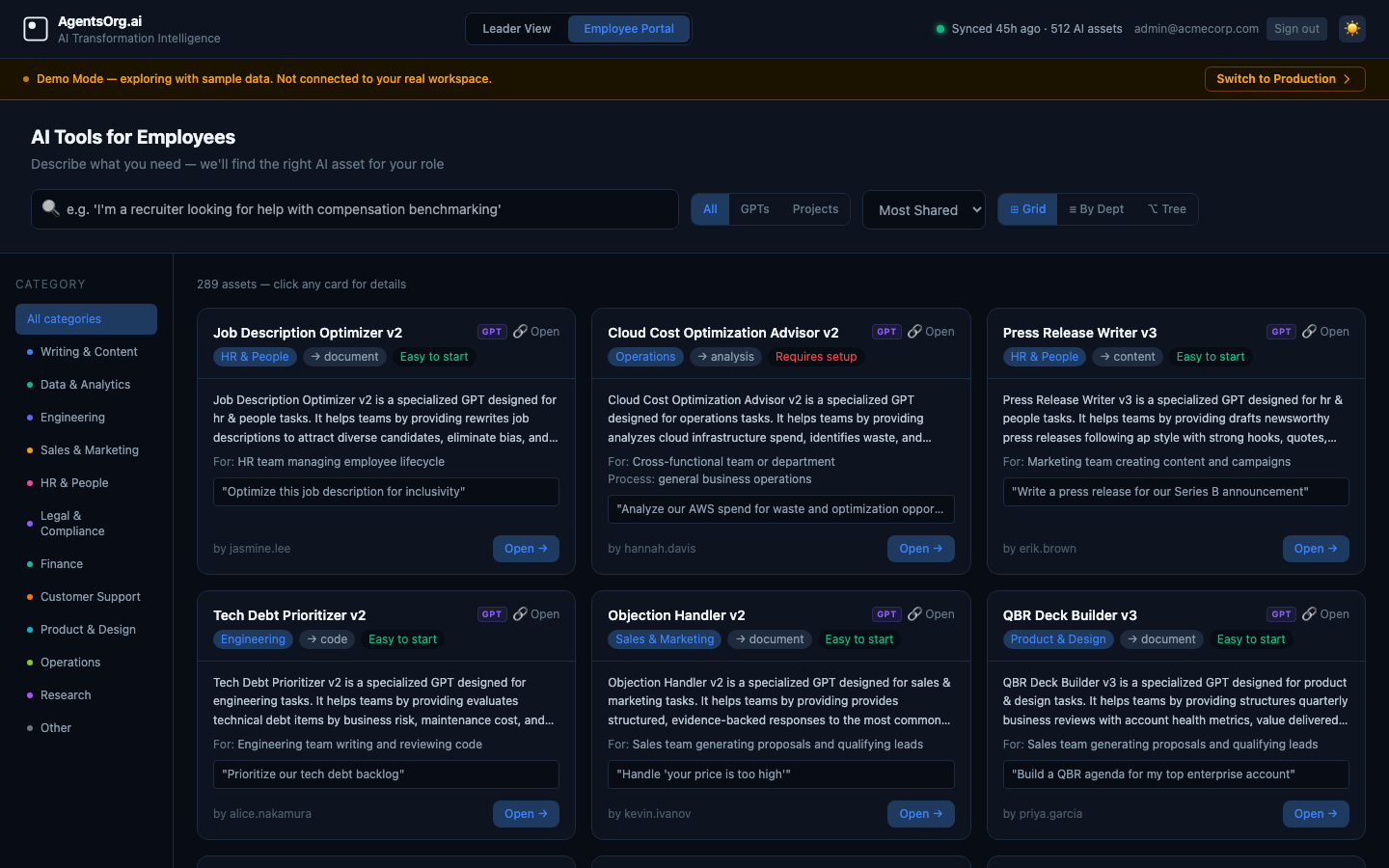

Read-only GPT and Project discovery for every employee. Search by role, category, or business need. Check if a certified solution already exists for your workflow before you build something new.

Built for a specific context

AgentsOrg is purpose-built for one environment. That focus is a feature.

Built for:

- CIOs and AI transformation leads managing ChatGPT Enterprise

- AI Center of Excellence teams needing portfolio visibility

- Platform owners responsible for AI governance and risk

- Digital transformation leaders building the internal AI standard

Not built for:

- OpenAI API developers building custom AI applications

- Individual ChatGPT users or non-Enterprise plans

- Agent builders looking for a no-code automation tool

- Multi-platform governance across Claude, Gemini, and ChatGPT today (Claude for Business and Gemini for Workspace support are on the roadmap)

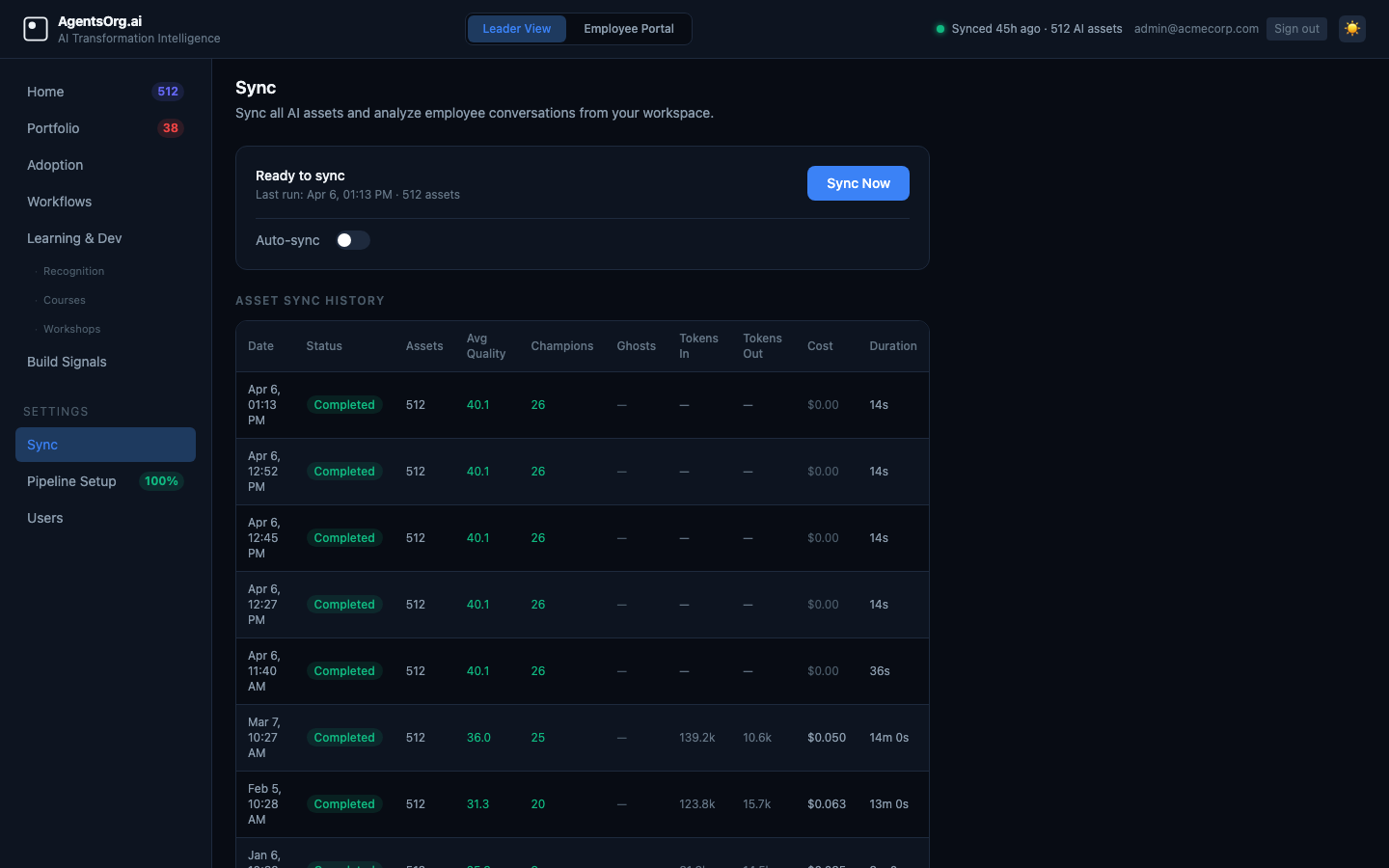

Up and running in minutes

Self-hosted. One command. Your data stays on your infrastructure.

Clone & deploy

Your IT team deploys in ~5 minutes. Three Docker containers: Postgres, FastAPI backend, React frontend. Everything wired up.

Try demo mode first

No API key needed. Switch on Demo mode to explore the full platform: quality and adoption scoring, conversation intelligence, workflow coverage analysis, build signals, builder recognition. Includes 500+ realistic GPTs. Connect your real workspace when you're ready.

Run the pipelines

Three pipelines run automatically. Asset pipeline: Fetch → Filter → Classify → Enrich → Score → Recommendations → Store. Conversation pipeline: Fetch JSONL → Aggregate → Topic Analysis → User Analysis → Workflow Intelligence. Clustering pipeline: Semantic similarity → LLM validation → Demand signal detection. Every run ends with a board-ready executive summary and 5–10 ranked priority actions.
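The stage ordering can be pictured as a chain of functions that each take and return the asset list. The sketch below shows only three of the asset-pipeline stages with toy bodies; the sample data, the filter rule, and the scoring formula are assumptions made for illustration.

```python
from typing import Callable, Dict, List

Assets = List[Dict]
Stage = Callable[[Assets], Assets]


def fetch(_: Assets) -> Assets:
    """Stand-in for the workspace fetch; returns hard-coded sample assets."""
    return [
        {"name": "Meeting Summarizer", "instructions": "Summarize meeting notes.", "chats": 120},
        {"name": "untitled", "instructions": "", "chats": 0},
    ]


def filter_empty(assets: Assets) -> Assets:
    """Drop assets with no instructions (an assumed filter rule)."""
    return [a for a in assets if a["instructions"]]


def score(assets: Assets) -> Assets:
    """Toy adoption score capped at 100; the real scoring model is not described here."""
    return [{**a, "adoption": min(100, a["chats"])} for a in assets]


def run_pipeline(stages: List[Stage]) -> Assets:
    """Run the stages in order, feeding each stage's output to the next."""
    assets: Assets = []
    for stage in stages:
        assets = stage(assets)
    return assets


print(run_pipeline([fetch, filter_empty, score]))
```

Keeping every stage to the same signature makes it straightforward to insert Classify, Enrich, or Store steps without touching the runner.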

Act on intelligence

The Home page opens with your AI executive summary and ranked priority actions. The quadrant shows exactly which GPTs to scale, promote, fix, or retire. Workflows shows where AI coverage is missing. Build Signals shows which tools to certify as org standards. The Employee Portal gives the whole org a place to discover what already exists.

From GPT & Project governance to full AI intelligence

Each phase answers a bigger question about your org's AI investment.

What are we building? Is it safe? Who's using it?

Custom GPTs · Projects · Conversations · Users

- Full GPT & Project portfolio discovery

- Risk flagging & compliance scoring

- Quality, adoption & risk composite scores (0–100)

- Quality × Adoption quadrant (Champion / Hidden Gem / Scaled Risk / Retirement)

- LLM-generated priority actions + executive summary

- Standardization opportunity detection (Build Signals)

- Builder recognition & scoring

- AI-powered L&D recommendations

- Workshop tracking & impact measurement

- Conversation intelligence (ghost assets, drift, knowledge gaps)

- Per-user adoption scoring & role fit analysis

- Workflow coverage analysis (covered / ghost / intent gaps)

- Portfolio health trend over time

- Per-asset score history

- SSO via OIDC (Okta, Entra ID, Google) with hard enforcement mode

- TOTP MFA (two-step login with authenticator app)

- Role-gated analytics (employees blocked from org intelligence)

- Demo mode (no API key needed)

Deeper measurement. Automated reporting.

Cost · Compliance · Reports

- Cost attribution per GPT (UI pending; data is already collected)

- Department & role benchmarking heatmaps

- Automated portfolio health reports (PDF / Slack / email)

- Compliance log analysis

- GPT lifecycle tracking (created → active → retired)

What's the full footprint of AI work in our org?

Automations · Canvases · Memories · Recordings · Codex

- Automation governance & audit

- Canvas knowledge mapping

- Memory footprint analysis

- Recording transcript intelligence

- Codex task analytics

- Cross-source AI maturity score

- Executive board-ready reporting

One governance layer across all enterprise AI.

Claude for Business · Gemini for Workspace

- Claude for Business portfolio discovery

- Google Gemini for Workspace governance

- Cross-platform risk scoring

- Unified builder profiles across tools

- Cross-platform workflow coverage map

- Org-wide AI maturity score (all platforms)

FAQ

What AI platforms does AgentsOrg support?

ChatGPT Enterprise and ChatGPT Team today, via OpenAI's Compliance API. Claude for Business (Anthropic) and Google Gemini for Workspace are on the roadmap. AgentsOrg started with ChatGPT Enterprise because OpenAI's Compliance API is the most mature enterprise data export available, but the governance problem applies across all enterprise AI platforms.

Is this affiliated with OpenAI?

No. Independent open-source project. Integrates with OpenAI's Compliance API, GPT API, and Embeddings API. Not endorsed by OpenAI.

Does my data leave my infrastructure?

Never. Fully self-hosted on your own PostgreSQL instance. API keys and OIDC secrets are Fernet-encrypted at rest. TOTP MFA secrets are encrypted before storage. Session cookies are HttpOnly + SameSite. Bearer tokens are restricted to API token type only — browser sessions cannot be replayed as API tokens.
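The bearer-token restriction can be illustrated with a signed token that carries a type claim. This is a sketch under assumptions, not the project's implementation: the token format, the `type` claim name, and the signing key are all hypothetical.

```python
import base64
import hashlib
import hmac
import json

SIGNING_KEY = b"demo-signing-key"  # hypothetical; a real deployment loads this from secure config


def issue(claims: dict) -> str:
    """Serialize claims and append an HMAC-SHA256 signature."""
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode()).decode()
    signature = hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}.{signature}"


def authenticate_bearer(token: str) -> dict:
    """Accept a bearer token only if it is validly signed and of type "api"."""
    payload, _, signature = token.partition(".")
    expected = hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature, expected):
        raise PermissionError("invalid signature")
    claims = json.loads(base64.urlsafe_b64decode(payload))
    if claims.get("type") != "api":
        # A browser session token replayed in an Authorization header is rejected here.
        raise PermissionError("only API tokens may be used as bearer tokens")
    return claims


api_token = issue({"sub": "svc-reporting", "type": "api"})
session_token = issue({"sub": "alice", "type": "session"})
print(authenticate_bearer(api_token)["sub"])
```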

Is this production-ready?

Yes. The full platform is live today: Custom GPT & Project portfolio discovery, risk flagging, standardization opportunity detection, quality and adoption scoring, builder recognition, L&D recommendations, conversation intelligence (ghost asset detection, per-user adoption insights, knowledge gap signals), workflow coverage analysis, and LLM-generated priority actions with a board-ready executive summary. Auth and security hardening is also complete: SSO via OIDC with hard enforcement, TOTP MFA, role-gated analytics, Fernet-encrypted secrets, and bearer token restrictions. Demo mode lets you explore everything without an API key.

How is this different from the OpenAI admin console?

The admin console is for managing users. AgentsOrg is for understanding what all those users are building: whether it's safe, redundant, worth the investment, and where AI demand is concentrating across the org. Different purpose entirely.

What's the ROI? How do I justify this to my CFO?

Three concrete levers: (1) Standardization of validated demand: when 6 teams independently build the same meeting-summary GPT, that is proof a shared certified solution should exist. One org standard replaces six fragmented rebuilds. (2) Risk prevention: one ungoverned GPT exposing sensitive data costs far more than the tool. Catching it early is the cheapest governance win available. (3) Targeted enablement: L&D spend goes to builders who actually need it, grounded in real skill gaps in their GPT output, not a blanket course rollout.

AI Transformation Advisors

Use AgentsOrg as your client assessment toolkit. Run a GPT & Project portfolio audit in minutes. Get listed in our directory of 50+ advisors.

See your GPT & Project portfolio in 5 minutes.

Live demo. No install. No API key. No signup.